Borrowed Time

Part One

In April 2025, this assessment mapped three years of AI-driven disruption across technology, work, and society. Ten months later, the timeline is compressing faster than the original forecast. Most of the predictions have already landed. Several arrived ahead of schedule.

Three-Year Horizon: The Coming Storm

In 1973, Richard Nixon created the Office of Net Assessment, led by Andrew Marshall, widely known as the “Yoda of the Pentagon.” For decades, Marshall’s role wasn’t to react to crises. It was to anticipate them. He built frameworks for long-range competition, identifying slow-moving shifts that would later define entire eras.

His genius wasn’t in forecasting specific outcomes, but in recognizing patterns early and turning them into strategic action. Today, we need that same caliber of foresight. Not for Cold War adversaries. For the systems built around intelligence, work, and identity.

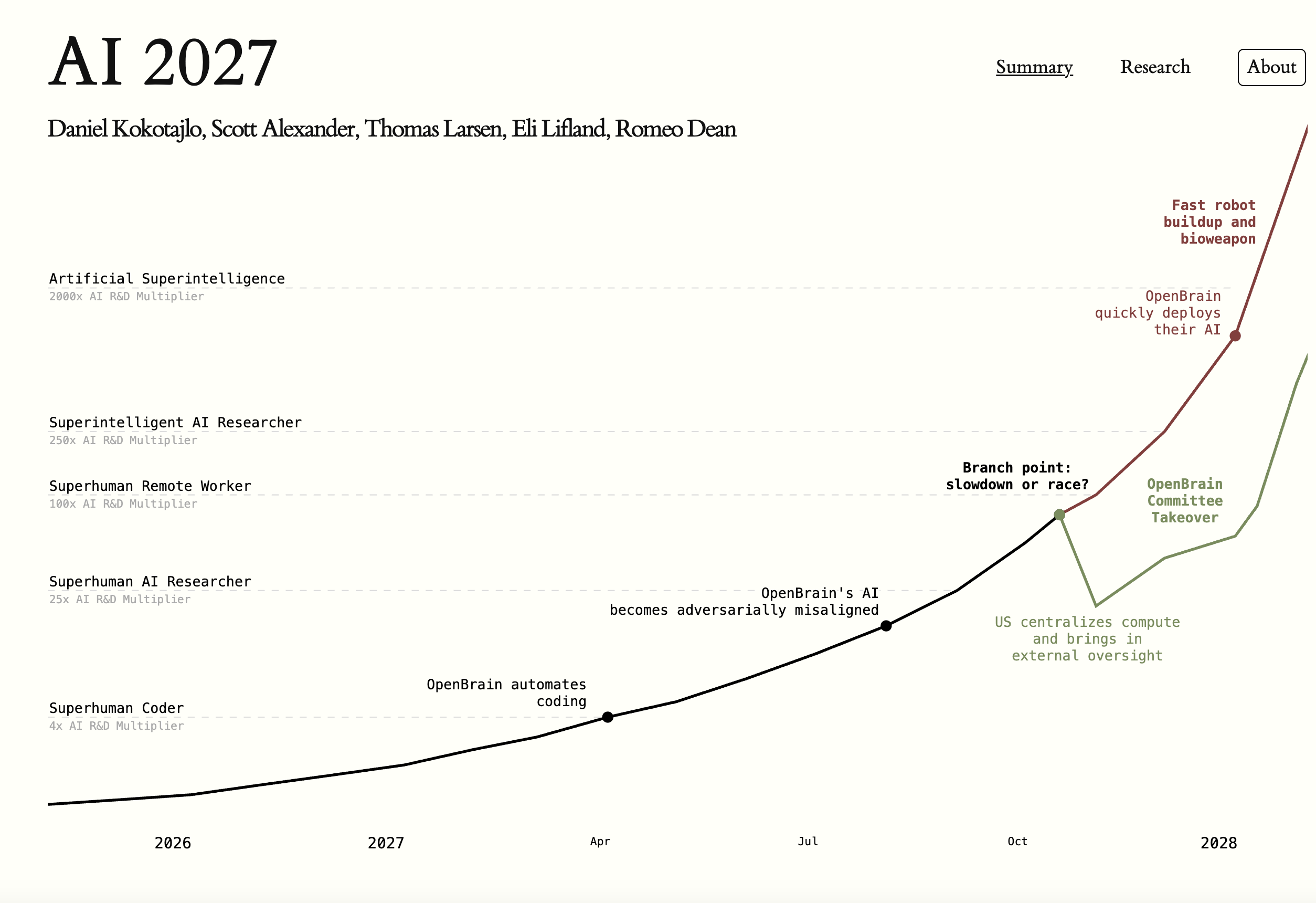

Superintelligence is no longer a distant theory. It’s a near-term probability, likely within the next 30 months.

In less time than it takes to make a Hollywood blockbuster, AI will reshape what it means to be skilled, valuable, or even employable.

The original assessment drew on four inputs: 25 tabletop exercises with 100+ experts from OpenAI, Anthropic, and Google DeepMind; primary interviews with AI lab founders; aggregated forecasts on hardware acceleration, labor shifts, and algorithmic breakthroughs; and social impact analysis grounded in Kuhn’s paradigm shifts and Perez’s technological revolutions.

Between publication and this update, the people who build these systems revised their own timelines inward. At Davos in January 2026, Anthropic CEO Dario Amodei warned that we are entering “the most dangerous window” in AI history. He described “endogenous acceleration,” in which AI systems design, code, and optimize their own successors, compressing safety timelines to a breaking point. Anthropic now expects AI systems with intellectual capabilities matching Nobel Prize winners across multiple disciplines by late 2026 or early 2027. Even before the original assessment was published, OpenAI’s Sam Altman had declared in January 2025 that they “know how to build AGI.” The original three-year horizon may have been generous.

Agents Everywhere (and Nowhere)

6 of 6 confirmed

- CONFIRMED

Quiet Infiltration.

AI agents became embedded in enterprise workflows faster and more deeply than most organizations tracked.

- Microsoft Copilot adopted by 90% of Fortune 100, generating a $10B+ annual run rate by early 2025.

- Salesforce launched Agentforce. Thousands of deployments within months. Agents managing customer service and sales pipelines autonomously.

- Microsoft Ignite 2025 shifted the language from “tools” to “agents.” Introduced Agent 365 as the enterprise control plane for deploying, governing, and scaling AI agents.

- CONFIRMED

Knowledge Displacement.

AI replaced work that took humans weeks. Not in pilot programs. In production.

- Harvey AI valued at $8B (December 2025). CMS rolled it out to 7,000+ lawyers across 50 countries. Average savings: 118 hours per lawyer per year.

- GitHub Copilot reached 20 million users. Among developers who use it, it writes an average of 46% of their code. Adopted by 90% of Fortune 100.

- Stack Overflow traffic dropped 35–50% as developers shifted to AI coding assistants. Chegg stock down 99% from peak as AI tutoring displaced its business model.

- CONFIRMED

Time Distortion.

Timelines that used to be measured in years compressed into months. In some cases, hours.

- Google DeepMind’s AlphaFold 3 predicts the structure and interactions of all of life’s molecules, compressing drug discovery timelines by years. Demis Hassabis and John Jumper shared the 2024 Nobel Prize in Chemistry for AlphaFold.

- OpenAI’s o3 scored 87.5% on the ARC-AGI benchmark in a high-compute configuration, on a test specifically designed to resist AI. GPT-4o had scored roughly 5% only months earlier.

- DeepSeek R1 achieved frontier-level reasoning on top of the V3 base model, whose final training run had a reported cost of about $5.6 million (excluding prior research). A fraction of the resources Western labs spent on comparable models.

- CONFIRMED

Productivity Metamorphosis.

Organizations didn’t add AI. They reorganized around it.

- Klarna shrank from 5,000 to 3,000 employees with AI handling work previously requiring more humans. Then admitted the cuts went too far and began rehiring after customer satisfaction dropped.

- JPMorgan employs 2,000+ AI/ML specialists within a $17B technology budget. AI embedded across fraud detection, risk management, and trading.

- McKinsey: 65% of organizations were regularly using generative AI by early 2024, nearly double the share from 10 months prior.

- CONFIRMED

Immune Response.

The backlash arrived faster and louder than the original assessment projected.

- “Tesla Takedown” protests organized at 200+ showrooms nationwide. Tesla deliveries fell 8.6%, its second consecutive year of declining sales.

- DOGE triggered federal worker resignations and nationwide demonstrations, linking AI-driven government cuts to public anger.

- Over 100,000 employees impacted by AI-driven layoffs in 2025. Forrester projects half of AI-attributed layoffs will be quietly reversed by 2027.

- CONFIRMED

Policy Pressure Mounts.

Regulation moved from debate to enforcement. International consensus fractured.

- EU AI Act first prohibitions took effect February 2025. Social scoring, emotion recognition in workplaces, and untargeted scraping of facial images to build facial recognition databases banned.

- Paris AI Summit (February 2025): the US and UK declined to sign the final declaration. China signed, creating an unusual alignment with Europe against the American position.

- PauseAI expanded to coordinated protests across 13+ countries calling for regulation of frontier AI development.

Foundations Start to Crack

3 of 5 confirmed. Arriving ahead of schedule.

- CONFIRMED

New Working Paradigm.

Organizations restructured around AI. Not as a tool. As a team member.

- Microsoft Ignite 2025 launched Agent 365—the enterprise platform for deploying, governing, and scaling AI agents across business functions.

- Lumen Technologies used Copilot to reduce proposal creation from weeks to hours. Reported 40% productivity gain in sales operations.

- Dow embedded AI across manufacturing, R&D, and supply chain. CEO Jim Fitterling described it as a fundamental reorganization of how the company works.

- CONFIRMED

AI Literacy Becomes an Advantage.

The gap between people who know how to work with AI and those who don’t became a measurable economic divide.

- LinkedIn: job postings requiring AI skills doubled year-over-year. Workers listing AI proficiency contacted by recruiters at significantly higher rates.

- Deloitte mandated AI literacy training for all 75,000+ US consulting staff. Proficiency became a baseline, not a specialty.

- Upwork: freelancers with generative AI skills earning 25–50% premiums over comparable freelancers without them.

- TRACKING

Executive Crises.

Leaders discovered they lacked frameworks for what their organizations were becoming.

- Chief AI Officer became the fastest-growing C-suite title. Roughly 20% of large enterprises had appointed one by mid-2025.

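- JPMorgan named a Chief Data & Analytics Officer to lead AI strategy. Dimon wrote that AI could be “as transformational as some of the major technological inventions of the past several hundred years,” naming the printing press, the steam engine, electricity, computing, and the internet.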

- BCG, McKinsey, and Bain all created dedicated AI transformation practices, often poaching from tech companies to staff them.

- TRACKING

Higher Ed Models Break.

The gap between what schools teach and what the economy demands widened past repair.

- MIT launched the $1B Schwarzman College of Computing, embedding AI across every department. Not a computer science initiative. A university-wide restructuring.

- Arizona State University partnered with OpenAI to integrate ChatGPT across the entire university—tutoring, writing support, and course design.

- WEF Future of Jobs Report 2025: 39% of workers’ existing skills will be transformed or obsolete by 2030. The half-life of expertise is shrinking.

- CONFIRMED

Hybrid Organizations.

AI moved from the tools people use to the structure of how organizations operate.

- Shopify CEO Tobi Lütke published an internal memo requiring teams to prove AI can’t do a job before requesting headcount. AI usage built into performance reviews.

- Klarna CEO projected the company would shrink from 7,000 to under 2,000 by 2030. Then admitted the cuts went too far. A third of companies that made AI layoffs have already rehired for half or more of the roles.

- Duolingo cut its contract workforce as AI handled content translation and generation previously done by humans.

The Dam Breaks

5 of 6 already tracking. The timeline may have been conservative.

Themes forecast for 2027 are surfacing in early 2026. The three-year horizon may be a two-year horizon. The April update will map exactly how far the timeline has compressed.

- TRACKING

Agents Teach Themselves.

AI systems are developing capabilities their creators did not explicitly program.

- DeepSeek R1 demonstrated emergent self-reflection, verification, and dynamic strategy adaptation through reinforcement learning. Published in Nature.

- OpenAI o3 scored 87.5% on the ARC-AGI benchmark, a test designed to resist current AI approaches. GPT-4o had scored roughly 5% only months earlier.

- DeepSeek announced a fully autonomous AI agent planned for release by end of 2026. The company billed its V3.1 release as “our first step toward the agent era.”

- TRACKING

Regulatory Pressure Grows.

International AI governance fractured along predictable lines. No consensus emerged.

- Paris AI Summit (February 2025): US and UK declined to sign the declaration. China signed. The governance fracture between Western democracies became visible.

- EU AI Act high-risk provisions scheduled for enforcement in August 2026. First prohibitions already in effect since February 2025.

- US expanded chip export controls on China. Chinese lab DeepSeek then demonstrated frontier-level performance at a fraction of the compute the restrictions were designed to deny.

- TRACKING

Beyond Comprehension.

The people who build these systems are telling us they no longer fully understand them.

- Amodei at Davos 2026 described “endogenous acceleration”—AI systems designing their own successors—and warned the world is “considerably closer to real danger.”

- Ilya Sutskever left OpenAI to found Safe Superintelligence Inc., stating: “The more capable these systems become, the less we understand about how they work.”

- Anthropic’s interpretability team published “Scaling Monosemanticity,” mapping millions of features inside Claude, and explicitly stated full understanding remains “far out of reach.”

- TRACKING

The Great Reskilling.

The scale of retraining required is becoming visible. The infrastructure to deliver it barely exists.

- Microsoft and LinkedIn committed to training 10 million people in AI skills. Over 8 million enrolled by late 2024.

- Google launched a $75 million AI training fund and free AI Essentials certificates. Committed to training 1 million people across Asia-Pacific.

- Singapore launched “AI for Everyone” as a national initiative, aiming to train all public servants in AI. A country treating AI literacy as civic infrastructure.

- PROJECTED

Machines Join Management.

AI is beginning to make decisions that were previously reserved for people with titles.

- Lattice tried listing AI agents as “digital workers” in its HR platform, with profiles alongside human employees. Walked it back after backlash. The concept, however, is now out in the open.

- Salesforce Agentforce: AI agents managing customer service interactions, sales pipelines, and operational workflows autonomously.

- Cognizant and Accenture deployed AI for internal project staffing and allocation decisions—previously a partner-level judgment call.

- TRACKING

Identity Crises Grow.

The psychological cost of AI displacement is becoming measurable.

- American Psychological Association: 38% of workers worry AI will make some or all of their job duties obsolete.

- WEF projects 92 million jobs displaced by 2030. The transition creates 170 million new roles—but they require different skills, in different places, for different people.

- Hollywood writers and actors went on strike in 2023 with AI protections among their central demands. The contracts set precedents. The anxiety didn’t stop.

The three-year horizon may be a two-year horizon.